How to Block GitHub Copilot

GitHub Copilot can put the IP rights of your code at risk. You may want to block engineers from using it.

What is GitHub Copilot?

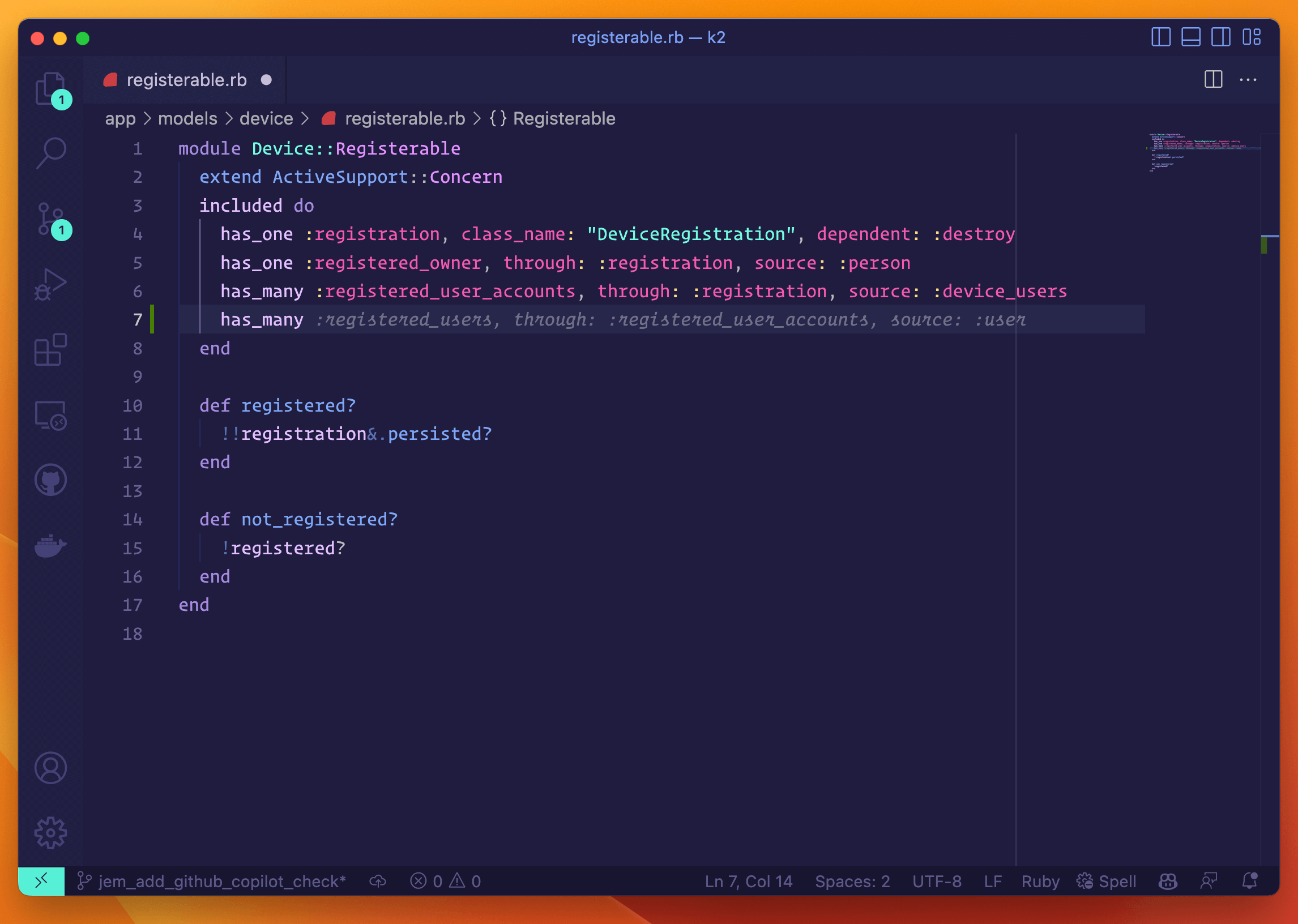

GitHub Copilot is an AI-powered service that integrates with popular code editors and IDEs. Once installed and activated, GitHub Copilot continuously monitors the source code a developer is writing and provides autocomplete-style suggestions in real time.

Should Organizations Block GitHub Copilot?

Many engineers have reported very positive experiences using GitHub Copilot and may already be highly dependent on its suggestions. Despite its usefulness, there are several reasons you may want to consider blocking it.

Copilot Suggests Code That You Don’t Have a License To Use

As we discussed in our blog, it has recently been alleged that GitHub Copilot suggests code that is indistinguishable from existing open source code. Code under certain open source licenses (“copyleft” licenses) may not be copied without proper attribution and, in some cases, copying it may force all adjacent code to be relicensed under an undesirable license (e.g., the GPL or similar).

If your organization considers its code valuable intellectual property, you may wish to discourage the use of GitHub Copilot until active and likely precedent-setting litigation is resolved.

Copilot’s Suggestions Contain Bugs And Security Vulnerabilities

GitHub makes no guarantees that code Copilot suggests is free of bugs or security vulnerabilities. The onus is on software engineers to evaluate Copilot’s suggestions as they would for code they discovered on a blog.

While it’s true that developers are used to evaluating code from third-party sources, one important distinction is the sheer quantity of suggestions GitHub Copilot supplies. When using Copilot, it’s not unusual to receive a suggestion each time you press the enter key to start a new line of code. This could mean hundreds of suggestions for a single file. Engineers, potentially overwhelmed by the quantity of suggestions, may not apply the same rigorous analysis as they would to code they occasionally source from blogs and community forums.

In our blog post about GitHub Copilot, we reference a paper that analyzed Copilot’s suggestions shortly after the service was launched. From the paper’s abstract (emphasis ours):

We explore Copilot’s performance on three distinct code generation axes examining how it performs given diversity of weaknesses, diversity of prompts, and diversity of domains. In total, we produce 89 different scenarios for Copilot to complete, producing 1,689 programs. Of these, we found approximately 40% to be vulnerable.

Since it’s not possible for a reviewer to know which code was suggested by GitHub Copilot and which code was written by the engineer, any productivity gains derived from Copilot may be undone by the extra rigor required during review to ensure vulnerable code hasn’t slipped in.

How Do I Block GitHub Copilot?

Currently, it is not possible to control Copilot settings for GitHub accounts associated with your organization. This may change soon: GitHub recently announced that it plans to launch “Copilot for Business.” Representatives from GitHub state: “Soon, businesses will be able to purchase and manage seat licenses for GitHub Copilot for their employees. They’ll get added admin controls for various GitHub Copilot settings on behalf of their organization.”

Hopefully, once available, this will be the safest and easiest way to ensure GitHub Copilot cannot operate on employee devices with access to company code.

In the meantime, we’ve developed a Check so you can see if Copilot is already in use at your organization.

Detecting GitHub Copilot

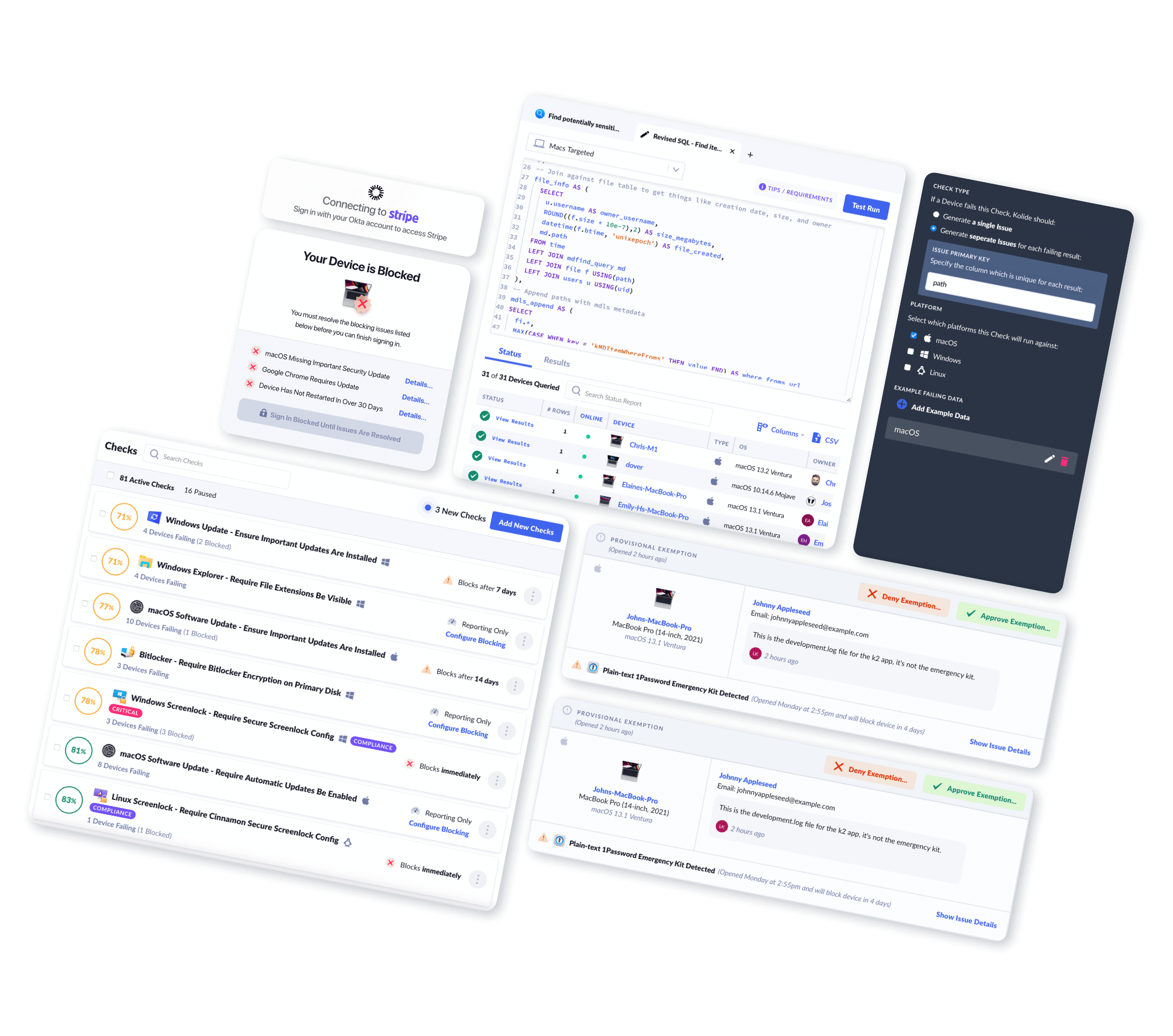

Kolide uses its agent to detect the presence of the GitHub Copilot plugin within VSCode, Microsoft Visual Studio, Neovim, and JetBrains IDEs (e.g., RubyMine). In addition to GitHub Copilot, Kolide is able to enumerate all VSCode extensions.
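To give a rough feel for what this kind of detection involves, here is a minimal Python sketch for the VSCode case only. It is not how Kolide's agent works; it simply assumes that VSCode keeps per-user extensions in `~/.vscode/extensions` and that Copilot's folders are named with a `github.copilot` prefix followed by a version. The function name `find_copilot_extensions` and both constants are hypothetical names chosen for this illustration.

```python
from pathlib import Path

# Assumption: VSCode's default per-user extension directory.
DEFAULT_EXTENSIONS_DIR = Path.home() / ".vscode" / "extensions"

# Assumption: Copilot extension folders are named "<publisher>.<name>-<version>",
# so they start with "github.copilot" (compared case-insensitively).
COPILOT_PREFIX = "github.copilot"


def find_copilot_extensions(extensions_dir: Path = DEFAULT_EXTENSIONS_DIR) -> list[str]:
    """Return sorted names of extension folders that look like GitHub Copilot."""
    if not extensions_dir.is_dir():
        # No extension directory means nothing to report.
        return []
    return sorted(
        entry.name
        for entry in extensions_dir.iterdir()
        if entry.is_dir() and entry.name.lower().startswith(COPILOT_PREFIX)
    )


if __name__ == "__main__":
    found = find_copilot_extensions()
    if found:
        print("Copilot extension folders found:", ", ".join(found))
    else:
        print("No Copilot extension folders found.")
```

A script like this only covers one editor on one user account, and it would miss extensions installed to a non-default location. The other editors listed above store their plugins elsewhere, which is part of why a fleet-wide agent is a better fit than ad hoc scripts.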

How Does Kolide Remediate This Problem?

This problem cannot be remediated through traditional automation with tools like an MDM. You need to be able to stop devices that fail this check from authenticating to your SaaS apps and then give end-users precise instructions on how to unblock their device.

Kolide's Okta Integration does exactly that. Once integrated into your sign-in flow, Kolide will automatically associate devices with your users' Okta identities. From there, it can block any device that exhibits this problem and then provide the user with step-by-step instructions on how to fix it. Once fixed, Kolide immediately unblocks their device. Watch a demo to find out more.